What is content aggregation and how does it work?

Content aggregation is the process of collecting, compiling, and organizing content from multiple sources into a single, unified location. It involves pulling together articles, data, product listings, news, or other structured information from across the web or internal systems, then presenting that content in a consistent, usable format. Businesses use content aggregation to monitor markets, power search tools, build data feeds, and keep users informed without requiring them to visit dozens of separate sources.

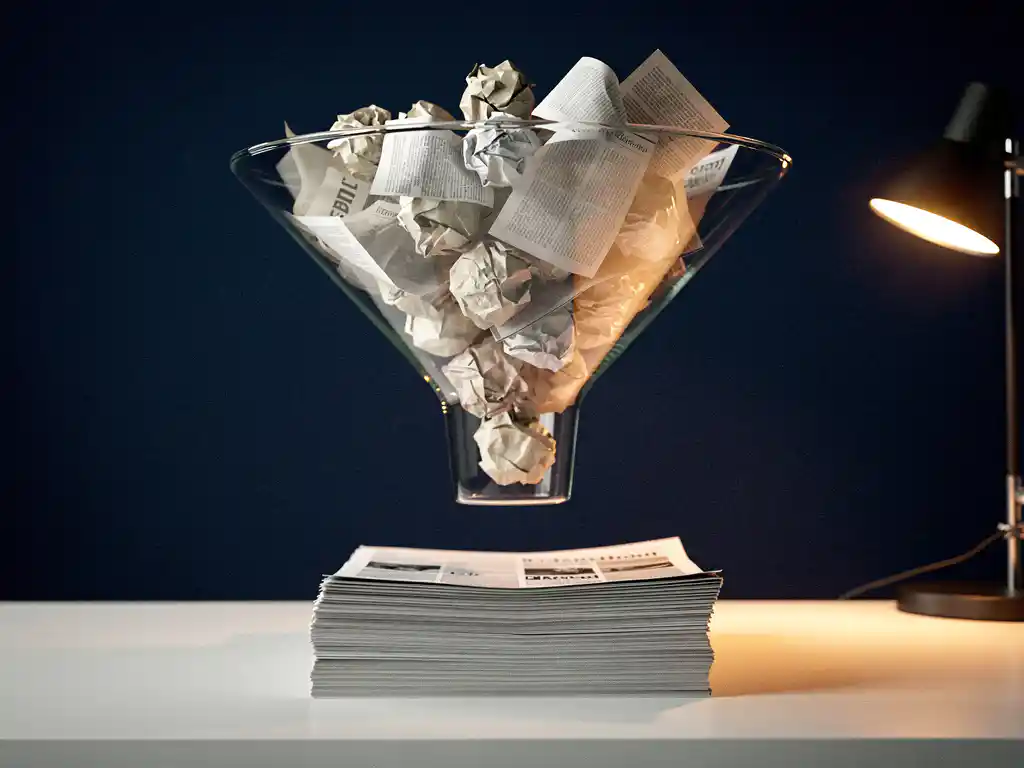

Scattered data sources are slowing down your decision-making

When relevant information lives across dozens of websites, databases, or internal systems, the time it takes to gather and compare it adds up fast. Teams end up doing manual research that should be automated, and by the time the data is compiled, it may already be outdated. The fix is straightforward: replace manual collection with a structured aggregation process that pulls from all your sources on a schedule, normalizes the output, and delivers it where your team or application actually needs it. That shift alone removes a significant bottleneck from data-driven workflows.

Relying on a single data source is holding back your search quality

Search tools and recommendation engines that draw from only one or two data sources produce narrow, incomplete results. Users notice when results feel thin or miss obvious matches, and that erodes trust quickly. Aggregating content from multiple sources broadens the index your search tool works from, which directly improves result relevance and coverage. The practical step here is to identify every source your users would reasonably expect results from, then build or adopt an aggregation pipeline that keeps those sources synchronized and searchable.

What is content aggregation and why does it matter?

Content aggregation is the automated collection of content or data from multiple sources, combined into a single structured dataset or feed. It matters because modern applications, search tools, and business intelligence platforms depend on comprehensive, up-to-date information. Manually gathering that information at scale is not practical, and aggregation makes it possible to work with large, diverse datasets efficiently.

For businesses, the value is concrete. An e-commerce platform that aggregates product data from multiple suppliers can offer broader inventory without managing each source manually. A market research firm that aggregates news and pricing data across industries gets a fuller picture than any single feed provides. A government portal that aggregates content from multiple departments gives citizens one place to find answers.

Content aggregation also feeds directly into search functionality, recommendation engines, and monitoring dashboards. Without it, these systems either work from incomplete data or require constant manual input to stay current.

How does content aggregation actually work?

Content aggregation works by using automated tools to fetch content from defined sources, parse and extract the relevant fields, normalize the data into a consistent structure, and store or deliver it to a target system. The process typically runs on a schedule or in response to triggers, so the aggregated dataset stays current without manual intervention.

The core steps in a content aggregation pipeline are:

- Source identification: Define which websites, APIs, RSS feeds, or databases contain the content you need.

- Crawling or fetching: Automated crawlers or API calls retrieve the raw content from each source.

- Parsing and extraction: The relevant fields, such as titles, prices, dates, or body text, are extracted from the raw HTML or data response.

- Normalization: Extracted data is cleaned and converted into a consistent format so records from different sources can be compared or combined.

- Storage and indexing: The structured data is stored in a database or search index, ready for querying or delivery.

- Delivery or presentation: The aggregated content is served to an application, dashboard, or end user through an API, feed, or search interface.
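The six steps above can be sketched as a small Python program. This is a minimal illustration, not a production pipeline. The two sources, their formats, and every function name (`fetch_catalog_api`, `parse_partner`, `run_pipeline`, and so on) are hypothetical. The "fetchers" return canned data instead of making network calls, and an in-memory dictionary stands in for a real database or search index.

```python
import json
from dataclasses import asdict, dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Record:
    """Normalized record shared by every source (illustrative fields only)."""
    source: str
    title: str
    url: str


# Hypothetical fetchers: a real pipeline would call an API, read a feed, or crawl pages.
def fetch_catalog_api() -> str:
    return json.dumps([{"name": "  Desk   lamp ", "link": "https://example.com/lamp"}])


def fetch_partner_export() -> str:
    return "Office chair|https://example.org/chair\n"


# Parsing and extraction: each source needs its own parser for its own format.
def parse_catalog(raw: str) -> Iterable[Record]:
    for item in json.loads(raw):
        yield Record("catalog", item["name"], item["link"])


def parse_partner(raw: str) -> Iterable[Record]:
    for line in raw.splitlines():
        if line.strip():
            title, url = line.split("|", 1)
            yield Record("partner", title, url)


def normalize(record: Record) -> Record:
    # Normalization: collapse stray whitespace so records from all sources look alike.
    return Record(record.source, " ".join(record.title.split()), record.url.strip())


Source = tuple[Callable[[], str], Callable[[str], Iterable[Record]]]


def run_pipeline(sources: list[Source]) -> dict[str, Record]:
    index: dict[str, Record] = {}  # stand-in for a database or search index, keyed by URL
    for fetch, parse in sources:
        raw = fetch()                    # crawling or fetching
        for record in parse(raw):        # parsing and extraction
            clean = normalize(record)    # normalization
            index[clean.url] = clean     # storage and indexing
    return index


def deliver(index: dict[str, Record]) -> str:
    # Delivery: serialize the aggregated records as a JSON feed, sorted for stable output.
    return json.dumps([asdict(r) for r in sorted(index.values(), key=lambda r: r.url)])


if __name__ == "__main__":
    index = run_pipeline([(fetch_catalog_api, parse_catalog),
                          (fetch_partner_export, parse_partner)])
    print(deliver(index))
```

Keying the index by URL means that running the pipeline again on a schedule updates existing records instead of piling up copies, which is one simple way to keep the aggregated dataset current.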

The complexity of each step depends on how consistent the source content is. Well-structured APIs are easier to aggregate than unstructured web pages, which require more sophisticated parsing logic to extract meaningful data reliably.
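Normalization is often where that extra parsing logic ends up. As an illustration, assume one supplier sends ISO dates, another uses day-first dates, and a news site writes dates out in English, while prices arrive with different thousands and decimal separators. The sketch below, with hypothetical source names and formats, maps each of them onto a single `date` and `Decimal` representation using only the Python standard library.

```python
from datetime import date, datetime
from decimal import Decimal

# Hypothetical per-source conventions; in practice you learn these from each source.
DATE_FORMATS = {
    "supplier_a": "%Y-%m-%d",   # 2024-03-05
    "supplier_b": "%d/%m/%Y",   # 05/03/2024 (day first)
    "news_site": "%B %d, %Y",   # March 5, 2024 (English month names)
}


def normalize_date(source: str, value: str) -> date:
    """Parse a source-specific date string into a plain date."""
    return datetime.strptime(value.strip(), DATE_FORMATS[source]).date()


def normalize_price(value: str, decimal_comma: bool) -> Decimal:
    """Turn '$1,299.00' or '1.299,00 EUR' into Decimal('1299.00')."""
    # Keep only digits and separators; drop currency symbols and codes.
    digits = "".join(ch for ch in value if ch.isdigit() or ch in ",.")
    if decimal_comma:
        # European style: '.' groups thousands, ',' marks decimals.
        digits = digits.replace(".", "").replace(",", ".")
    else:
        # English style: ',' groups thousands, '.' marks decimals.
        digits = digits.replace(",", "")
    return Decimal(digits)
```

Using `Decimal` rather than `float` avoids rounding surprises when prices from different sources are compared or summed. A value such as `05/03/2024` is ambiguous on its own; the per-source format table is what resolves it.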

What are the main types of content aggregation?

The main types of content aggregation include news aggregation, product data aggregation, RSS feed aggregation, social media aggregation, enterprise data aggregation, and specialized forms such as real estate and financial data aggregation. Each type differs in its source structure, update frequency, and how the aggregated output is used downstream.

- News aggregation: Collects articles and headlines from multiple publications, useful for media monitoring and content curation platforms.

- Product data aggregation: Gathers product listings, prices, and availability from multiple retailers or suppliers, commonly used in e-commerce and price comparison tools.

- RSS feed aggregation: Pulls structured content updates from websites that publish RSS or Atom feeds, a relatively straightforward form of aggregation.

- Social media aggregation: Compiles posts, mentions, and engagement data from social platforms, typically via official APIs.

- Enterprise data aggregation: Combines data from internal systems such as CRMs, ERPs, and databases into a unified view for analytics or search.

- Real estate and financial data aggregation: Collects listings, valuations, or market data from multiple sources to power specialized search and analysis tools.
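RSS feed aggregation is the easiest of these types to illustrate, because the feeds are already structured. A minimal sketch, assuming RSS 2.0 feeds whose items all carry a `pubDate`: it parses each feed with `xml.etree.ElementTree`, converts the dates with `email.utils.parsedate_to_datetime`, and merges everything into one list sorted newest first. The inline feeds are hypothetical stand-ins for feeds that would normally be fetched over HTTP.

```python
import xml.etree.ElementTree as ET
from email.utils import parsedate_to_datetime

# Two small inline RSS 2.0 feeds standing in for feeds fetched over HTTP.
FEED_A = """<rss version="2.0"><channel><title>Feed A</title>
<item><title>Older story</title><link>https://a.example/1</link>
<pubDate>Mon, 04 Mar 2024 09:00:00 GMT</pubDate></item>
</channel></rss>"""

FEED_B = """<rss version="2.0"><channel><title>Feed B</title>
<item><title>Newer story</title><link>https://b.example/7</link>
<pubDate>Tue, 05 Mar 2024 12:30:00 +0100</pubDate></item>
</channel></rss>"""


def parse_feed(xml_text: str) -> list[dict]:
    channel = ET.fromstring(xml_text).find("channel")
    source = channel.findtext("title", default="")  # the channel's own title
    entries = []
    for item in channel.iter("item"):
        entries.append({
            "source": source,
            "title": item.findtext("title", default=""),
            "link": item.findtext("link", default=""),
            # Assumes every item has an RFC 822 pubDate; real feeds may omit it.
            "published": parsedate_to_datetime(item.findtext("pubDate")),
        })
    return entries


def aggregate(feeds: list[str]) -> list[dict]:
    # Merge all feeds into one list, newest items first.
    merged = [entry for feed in feeds for entry in parse_feed(feed)]
    return sorted(merged, key=lambda e: e["published"], reverse=True)


if __name__ == "__main__":
    for entry in aggregate([FEED_A, FEED_B]):
        print(entry["published"].isoformat(), entry["source"], entry["title"])
```

Atom feeds use different element names (`entry`, `updated`) inside an XML namespace, so they would need a separate parser feeding the same merged list.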

What's the difference between content aggregation and web scraping?

Content aggregation is the broader process of collecting and combining content from multiple sources. Web scraping is one specific technique used within that process. Scraping extracts data from web pages by parsing their HTML. Aggregation describes the full pipeline: sourcing, extracting, normalizing, and delivering the combined dataset.

In practice, web scraping is often a component of a content aggregation workflow, particularly when the target sources do not offer structured APIs or feeds. When a source does provide an API, aggregation can happen without scraping at all.
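When a source offers no API or feed, the extraction step has to read the HTML itself. The sketch below uses Python's built-in `html.parser` to pull a product title and price out of a page. The class names `product-title` and `price`, and the sample page, are hypothetical, since every site structures its markup differently. Production scrapers more often use a library such as BeautifulSoup or lxml, and pages rendered by JavaScript need a headless browser before any parsing can happen.

```python
from html.parser import HTMLParser
from typing import Optional


class ProductPageParser(HTMLParser):
    """Collect the text of elements whose class names a field of interest."""

    # Hypothetical mapping from CSS class to output field; real sites each need their own.
    FIELDS = {"product-title": "title", "price": "price"}

    def __init__(self) -> None:
        super().__init__()
        self.data: dict = {}
        self._current: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        for cls in classes:
            if cls in self.FIELDS:
                self._current = self.FIELDS[cls]

    def handle_endtag(self, tag):
        # Simplification: any closing tag ends the current field (no nested markup).
        self._current = None

    def handle_data(self, data):
        if self._current and data.strip():
            self.data[self._current] = self.data.get(self._current, "") + data.strip()


def extract_product(html: str) -> dict:
    parser = ProductPageParser()
    parser.feed(html)
    parser.close()
    return parser.data


PAGE = """<html><body>
<h1 class="product-title">Desk lamp</h1>
<p>Adjustable arm, warm light.</p>
<span class="price">EUR 24.95</span>
</body></html>"""

if __name__ == "__main__":
    print(extract_product(PAGE))  # {'title': 'Desk lamp', 'price': 'EUR 24.95'}
```

The parser resets on any closing tag, so it only handles fields with no nested markup inside them; that simplification keeps the example short. The extracted strings would then go through the same normalization step as data from any other source.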

The distinction matters because web scraping carries its own technical and legal considerations, such as handling dynamic JavaScript-rendered pages, managing rate limits, and respecting robots.txt directives. Aggregation as a concept is source-agnostic. Scraping is a specific method for extracting data from web pages that do not offer a more structured access point.

What tools and technologies power content aggregation?

Content aggregation relies on web crawlers, data parsers, search indexing engines, and pipeline orchestration tools. Common technologies include Apache Nutch for large-scale crawling, Apache Solr and Elasticsearch for indexing and search, and custom-built APIs for normalized data delivery. The right stack depends on source volume, update frequency, and how the data is used.

For smaller-scale aggregation, RSS readers and lightweight scraping libraries handle the job. For enterprise-scale pipelines that process millions of URLs across many sources, distributed crawling frameworks and Lucene-based search engines such as Solr and Elasticsearch become necessary. Apache Hadoop is often used alongside Nutch for processing large crawl datasets; Nutch 1.x runs its crawl steps as Hadoop MapReduce jobs.
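For the indexing step, Elasticsearch accepts documents in batches through its bulk API, where each document is preceded by an action line and the whole body is newline-delimited JSON. The sketch below only builds such a body and does not send it. The index name `aggregated-products`, the sample documents, and the choice of URL as document ID are assumptions for illustration, and Solr has its own update format that this does not cover.

```python
import json


def build_bulk_body(index_name: str, docs: list[dict], id_field: str = "url") -> str:
    """Build an Elasticsearch _bulk request body (NDJSON: action line, then source line)."""
    lines = []
    for doc in docs:
        # Using the URL as _id means a re-crawled page overwrites its old version.
        lines.append(json.dumps({"index": {"_index": index_name, "_id": doc[id_field]}}))
        lines.append(json.dumps(doc))
    return "\n".join(lines) + "\n"  # the bulk API expects a trailing newline


# Hypothetical aggregated documents.
docs = [
    {"url": "https://example.com/lamp", "title": "Desk lamp", "source": "catalog"},
    {"url": "https://example.org/chair", "title": "Office chair", "source": "partner"},
]
body = build_bulk_body("aggregated-products", docs)
print(body)
```

The resulting body would be sent as a `POST` to the cluster's `_bulk` endpoint with the header `Content-Type: application/x-ndjson`. Because the `index` action replaces any existing document with the same `_id`, re-crawling a page updates its entry rather than adding a duplicate.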

The delivery layer matters as much as the collection layer. Aggregated data needs to reach its destination in a usable format, whether that is a JSON API, a search index, a structured database, or a real-time feed. Many aggregation setups also include deduplication logic to prevent the same content from appearing multiple times when it is published across several sources.
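A simple form of that deduplication logic is to fingerprint each item's normalized text and keep only the first item per fingerprint. This sketch, with hypothetical field names `title` and `body` and made-up sample items, lowercases the text, collapses whitespace, and hashes the result with SHA-256. It only catches copies that are identical after normalization; detecting near-duplicates, such as an article republished with an edited headline, usually calls for techniques like SimHash or MinHash instead.

```python
import hashlib
import re


def fingerprint(title: str, body: str) -> str:
    """Hash case- and whitespace-normalized text so trivially different copies match."""
    text = re.sub(r"\s+", " ", f"{title}\n{body}".lower()).strip()
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def deduplicate(items: list[dict]) -> list[dict]:
    """Keep the first occurrence of each fingerprint, preserving input order."""
    seen: set = set()
    unique = []
    for item in items:
        key = fingerprint(item["title"], item["body"])
        if key not in seen:
            seen.add(key)
            unique.append(item)
    return unique


items = [
    {"source": "wire", "title": "Rates unchanged", "body": "The central bank held rates."},
    {"source": "portal", "title": "Rates  unchanged", "body": "The Central Bank held rates. "},
    {"source": "blog", "title": "New library release", "body": "Version 2.0 is out."},
]
print([item["source"] for item in deduplicate(items)])  # ['wire', 'blog']
```

Keeping the first occurrence makes the result depend on source order, so a pipeline that prefers the original publisher would sort its inputs by source priority before deduplicating.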

How do you ensure content aggregation stays legal and ethical?

Legal and ethical content aggregation requires respecting robots.txt files, honoring rate limits, complying with a website's terms of service, and following applicable data privacy regulations such as the GDPR. Aggregating publicly available content is often permissible, depending on the jurisdiction and the source's terms, but how that content is stored, processed, and republished determines whether the activity remains compliant.

Key practices for staying on the right side of the law include:

- Check robots.txt: This file specifies which parts of a site crawlers are allowed to access. Ignoring it creates legal and reputational risk.

- Review terms of service: Many websites explicitly restrict automated data collection. Violating these terms can result in legal action.

- Respect rate limits: Aggressive crawling can overload servers. Throttle your requests to avoid disrupting the source site.

- Handle personal data carefully: If aggregated content includes personal information, GDPR and similar regulations apply. Establish a lawful basis for processing and limit retention periods.

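- Attribute content appropriately: When republishing aggregated content, link back to the original source to give proper credit. Attribution alone does not make republishing copyrighted material lawful, so reproduce only what you have the right to use.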
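Two of these practices, checking robots.txt and throttling requests, can be enforced in the crawler itself. A minimal sketch using Python's `urllib.robotparser`: it parses a robots.txt file (inlined here rather than downloaded), skips disallowed URLs, and waits the site's `Crawl-delay` between requests, falling back to an assumed one-second default. The user agent string, the sample URLs, and the injected `fetch` function are hypothetical, and the sketch assumes all URLs belong to a single host; real crawlers keep a separate queue and delay per host.

```python
import time
from typing import Callable, Optional
from urllib.robotparser import RobotFileParser

# Inline robots.txt for illustration; a live crawler would call set_url() and read().
ROBOTS_TXT = """User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def make_policy(robots_txt: str) -> RobotFileParser:
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def polite_crawl(
    urls: list[str],
    policy: RobotFileParser,
    fetch: Callable[[str], str],
    agent: str = "example-aggregator",
    default_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> dict[str, str]:
    """Fetch allowed URLs from ONE host, pausing between requests."""
    delay: Optional[float] = policy.crawl_delay(agent)
    if delay is None:
        delay = default_delay
    results: dict[str, str] = {}
    for url in urls:
        if not policy.can_fetch(agent, url):
            continue  # robots.txt disallows this path for our user agent
        if results:
            sleep(delay)  # throttle: wait before every request after the first
        results[url] = fetch(url)
    return results


if __name__ == "__main__":
    urls = ["https://example.com/a", "https://example.com/private/x", "https://example.com/b"]
    pages = polite_crawl(urls, make_policy(ROBOTS_TXT), fetch=lambda u: f"<html>{u}</html>")
    print(sorted(pages))
```

The `sleep` parameter is injectable so the throttling can be tested without real waiting. This handles only the technical side; terms of service and personal-data obligations still need to be reviewed separately.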

Ethical aggregation also means being transparent about what data you collect and why. Organizations operating under strict compliance requirements, such as those in finance or government, should document their aggregation practices and review them regularly as regulations evolve.

How Openindex helps with content aggregation

We specialize in building and managing content aggregation pipelines for organizations that need reliable, structured data at scale. Whether you need to aggregate product data, news content, real estate listings, or internal enterprise data, we handle the full pipeline from crawling to delivery. Our solutions are built on proven open source technologies including Apache Solr, Elasticsearch, and Apache Nutch, and are designed to scale with your data volume.

Here is what we offer:

- Crawling as a Service: We manage the entire crawling process so you receive clean, structured data without worrying about infrastructure or maintenance.

- Custom aggregation pipelines: Tailored to your specific sources, data formats, and delivery requirements.

- Search integration: Aggregated content can be fed directly into a search engine that is deployable on your website with a single line of JavaScript.

- GDPR-compliant data collection: We build aggregation workflows that respect legal requirements from the ground up.

- API delivery: Receive your aggregated data as a structured feed or API, ready to integrate into your application.

If you are ready to stop managing scattered data sources manually and want a scalable aggregation solution built for your needs, get in touch with us and we will work out the right approach together.